Flipping the AI coin

TLDR

This is not another "AI + Web3" rosy VC writeup. We're optimistic about merging the two technologies, but the text below is a call to arms; without action, that optimism won't be justified.

Why? Because developing and running the best AI models requires significant capital expenditure on the most cutting-edge and often hard-to-get hardware, as well as very domain-specific R&D. Slapping crypto incentives on top to crowdsource these, as most Web3 AI projects are doing, is not enough to outweigh the tens of billions of dollars poured in by the large corporations that hold AI development in a firm grip. Given hardware constraints, this may be the first big software paradigm that smart and creative engineers outside of incumbent organizations have no resources to disrupt.

Software is "eating the world" faster and faster and is soon bound to take off exponentially with AI acceleration. And, as things currently stand, all this "cake" is going to the tech incumbents, while end users, including governments and big businesses, let alone consumers, become even more beholden to their power.

Incentive Misalignment

All of this is unfolding at the most unfortunate time, with 90% of decentralized web participants busy chasing the golden goose of easy fiat gains from narrative-driven development. Yes, in our industry developers follow the investors, not the other way around. The motivation ranges from openly admitted to subtle and subconscious, but the narratives and the markets forming around them drive a lot of decision making in Web3. Participants are too engulfed in a classic reflexive bubble to notice the world outside, except for narratives that help push this cycle further. And AI is obviously the biggest one, since it is also undergoing a boom of its own.

We have spoken with dozens of teams at the AI x Crypto intersection and can confirm that many of them are very capable, mission-driven and passionate builders. But such is human nature: when confronted with temptation, we tend to succumb and then rationalize the choice after the fact.

An easy path to liquidity has been a historic curse of the crypto industry, slowing its development and useful adoption by years at this point. It diverts even the most faithful crypto disciples towards "pumping the token". The rationalization is that with more capital at hand in the form of tokens, builders will have better chances of success.

The relatively low sophistication of both institutional and retail capital lets builders make claims detached from reality while still enjoying valuations as if those claims had already come to fruition. The outcome is entrenched moral hazard and capital destruction, with very few such strategies working out over the long term. Necessity is the mother of invention, and when it's gone, so are the inventions.

It couldn't have happened at a worse time. While the smartest tech entrepreneurs, state actors and enterprises, big and small, are racing to secure their share of the benefits of the AI revolution, crypto founders and investors are opting for a "quick 10x" instead of a lifetime of 1000x's, which, in our view, is the real opportunity cost here.

A Rough Summary of the Web3 AI Landscape

Given the incentives described above, the taxonomy of Web3 AI projects comes down to:

- Legitimate (also sub-divided between realists and idealists)

- Semi-legitimate, and

- Fakers

Basically, we think builders know exactly what it takes to keep up with their Web2 competition, and which verticals offer a real chance to compete versus which are more of a pipe dream that can nevertheless be pitched to VCs and an unsophisticated public.

The goal is to be able to compete here and now. Otherwise, the speed of AI development may leave Web3 behind while the world leap-frogs to the dystopian Web4 of Corporate AI in the West versus State AI in China. Those who can't become competitive soon enough and rely on distributed tech to catch up over a longer time horizon are too optimistic to be taken seriously.

Obviously, this is a very rough generalization, and even the faker group contains at least a few serious teams (and probably more who are merely delusional dreamers). But this text is a call to arms, so we don't intend to be objective but rather to appeal to the reader's sense of urgency.

Legitimate:

- Middleware for "bringing AI on-chain". The founders behind such solutions, of whom there aren't many, understand that decentralized training or inference of the models users actually want (the cutting-edge ones) is unfeasible, if not impossible, at the moment. So finding a way to connect the best centralized models with the on-chain environment, letting it benefit from sophisticated automation, is a good enough first step for them. Hardware enclaves or TEEs (trusted execution environments, i.e. isolated, "air-gapped" processors) that can host API access points, 2-sided oracles (indexing on- and off-chain data bidirectionally) and verifiable off-chain compute environments for agents seem to be the best solution at the moment. There are also co-processor architectures that use zero-knowledge proofs (ZKPs) to snapshot state changes (rather than verify the full computation), which we also find feasible in the mid-term.

The more idealistic approach to the same problem tries to verify off-chain inference in order to bring it on par with on-chain computation in terms of trust assumptions. In our view, the goal here should be to let AI perform tasks on- and off-chain as if in a single coherent runtime environment. However, most proponents of inference verifiability talk about "trusting the model weights" and other hairy goals of the same kind that will only become relevant in years, if ever. Recently, founders in this camp have started exploring alternative approaches to inference verifiability, but originally it was all ZKP-based. While a lot of brainy teams are working on ZKML, as it has come to be known, they are taking too big a risk by betting that cryptographic optimizations will outpace the complexity and compute requirements of AI models. We thus deem them unfit for competition, at least for now. Yet some recent progress is interesting and shouldn't be ignored.

Semi-legitimate:

- Consumer apps that use wrappers around closed- and open-source models (e.g. Stable Diffusion or Midjourney for image generation). Some of these teams are first to market and have actual user traction, so it's not fair to call them all phony, but only a handful are thinking deeply about how to both evolve their underlying models in a decentralized fashion and innovate in incentive design. There are some interesting governance/ownership spins on the token component here and there. But the majority of projects in this category just slap a token onto an otherwise centralized wrapper on top of, e.g., the OpenAI API to get a valuation premium or faster liquidity for the team.

What neither of the two camps above addresses is training and inference for big models in decentralized settings. Right now there is no way to train a foundational model in reasonable time without relying on tightly-connected hardware clusters. "Reasonable time", given the level of competition, is the key factor.

Some promising research on the topic has come out recently and, theoretically, approaches like Differential Data Flow may be extended to distributed compute networks to boost their capacity in the future (as networking capabilities catch up with data stream requirements). But competitive model training still requires communication between localized clusters rather than individual distributed devices, plus cutting-edge compute (retail GPUs are becoming increasingly uncompetitive).
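To make the bandwidth argument concrete, here is a back-of-the-envelope sketch. It estimates how long one full gradient synchronization takes with a standard ring all-reduce, in which each participant sends and receives roughly 2(n-1)/n times the gradient size. All inputs are hypothetical assumptions chosen for illustration, not figures from any specific project: a 70B-parameter model, 16-bit gradients, 64 participants, and a 1 Gbps residential link compared with a 400 Gbps datacenter interconnect. Latency, gradient compression and overlap with compute are ignored.

```python
def ring_allreduce_seconds(params: float, bytes_per_param: int, nodes: int, link_gbps: float) -> float:
    """Approximate time for one ring all-reduce of the full gradient.

    Each node sends (and receives) about 2 * (n - 1) / n times the gradient size.
    Latency, compression and compute/communication overlap are ignored.
    """
    grad_bytes = params * bytes_per_param
    bytes_on_wire = 2 * (nodes - 1) / nodes * grad_bytes
    link_bytes_per_s = link_gbps * 1e9 / 8  # gigabits per second -> bytes per second
    return bytes_on_wire / link_bytes_per_s


# Hypothetical inputs for illustration only.
PARAMS = 70e9          # 70B-parameter model (assumption)
BYTES_PER_PARAM = 2    # fp16/bf16 gradients (assumption)
NODES = 64             # number of participants (assumption)

home = ring_allreduce_seconds(PARAMS, BYTES_PER_PARAM, NODES, link_gbps=1)
cluster = ring_allreduce_seconds(PARAMS, BYTES_PER_PARAM, NODES, link_gbps=400)

print(f"1 Gbps residential link: {home / 60:.1f} minutes per sync")
print(f"400 Gbps interconnect:   {cluster:.1f} seconds per sync")
```

Since a training run needs a very large number of such synchronizations, the gap between the two results compounds, which is why training gravitates towards tightly-connected clusters.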

Research into localizing inference (one of the two ways to decentralize it) by shrinking model size has also progressed recently, but no existing Web3 protocols leverage it.

The issues with decentralized training and inference logically bring us to the last of the three camps, by far the most important one and hence the most emotionally triggering for us ;-)

Fakers:

- Infrastructure applications, mostly in the decentralized server space, offering either bare-bones hardware or decentralized model training/hosting environments on top. There are also software infrastructure projects pushing protocols for, e.g., federated learning (decentralized model training), or combining software and hardware components into a single platform where one can train and deploy a decentralized model end-to-end. The majority of them lack the sophistication required to actually address the problems they claim to solve, and naive "token incentive + market tailwind" thinking prevails. None of the solutions we've seen in either public or private markets comes close to meaningful competition here and now. Some may evolve into workable (but niche) offerings, but we need something fresh and competitive here and now, and that can only happen through innovative design that addresses distributed compute bottlenecks. In training, not only speed but also verifiability of the work done and coordination of training workloads are big problems, adding to the bandwidth bottleneck.

We need a set of competitive, truly decentralized foundational models, and those require decentralized training and inference to work. Losing AI may completely negate any and all achievements "decentralized world computers" have made since the advent of Ethereum. If computers become AI and AI is centralized, there will be no world computer to speak of, other than some dystopian version of it.

Training and inference are the heart of AI innovation. As the rest of the AI world moves towards more tightly-knit architectures, Web3 needs an orthogonal solution to compete, because competing head-on is becoming less feasible very fast.

Size of The Problem

It's all about compute. The more you throw at both training and inference, the better your results. Yes, there are tweaks and optimizations here and there, and compute itself is not homogeneous (there's now a whole variety of new approaches to overcoming the bottlenecks of the traditional Von Neumann architecture for processing units), but it still all comes down to how many matrix multiplications you can do, over how big a memory chunk, and how fast.
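One way to put numbers on "how many matrix multiplications" is the rule of thumb from the scaling-law literature that training a dense transformer costs roughly 6 FLOPs per parameter per training token (C ≈ 6·N·D). The sketch below uses that approximation to turn a training budget into GPU-days. The model size, token count, per-accelerator peak throughput and utilization are hypothetical assumptions, not figures from this article or from any vendor.

```python
def training_flops(params: float, tokens: float) -> float:
    """Rule-of-thumb training compute for a dense transformer: ~6 FLOPs per parameter per token."""
    return 6 * params * tokens


def gpu_days(total_flops: float, peak_flops_per_gpu: float, utilization: float) -> float:
    """Convert total FLOPs into GPU-days at a given fraction of peak throughput."""
    effective_flops_per_s = peak_flops_per_gpu * utilization
    return total_flops / effective_flops_per_s / 86_400  # 86,400 seconds per day


# Hypothetical inputs for illustration only.
N = 70e9      # model parameters (assumption)
D = 2e12      # training tokens (assumption)
PEAK = 1e15   # ~1 PFLOP/s dense 16-bit peak per accelerator (assumption)
MFU = 0.4     # fraction of peak actually achieved (assumption)

c = training_flops(N, D)
days = gpu_days(c, PEAK, MFU)
print(f"Training compute: {c:.2e} FLOPs")
print(f"~{days:,.0f} GPU-days, i.e. ~{days / 1000:.0f} days on 1,000 GPUs")
```

Doubling either the model size or the data doubles the bill, and the only levers left are more accelerators, faster accelerators, or better utilization, all of which favor whoever controls the most cutting-edge hardware.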

That's why we are witnessing such a strong build-out on the data center front by the so-called "Hyperscalers", which are all looking to create a full stack with an AI model powerhouse at the top and the hardware that powers it underneath: OpenAI (models) + Microsoft (compute), Anthropic (models) + AWS (compute), Google (both) and Meta (increasingly both, by doubling down on its own data center buildout). There's more nuance, more interplay and more parties involved, but we'll leave that out. The big picture is that Hyperscalers are investing billions of dollars, like never before, into data center buildout and creating synergies between their compute and AI offerings, which are expected to pay off massively as AI proliferates across the global economy.

Let's just look at the level of build-out expected this year alone from these four companies:

- Meta anticipates $30-37bn in capital expenditures in 2024, which is likely to be heavily skewed towards data centers.

- Microsoft spent around $11.5bn in 2023 on CapEx and is widely rumored to invest another $40-50bn in '24-'25! This can be partially inferred from the enormous data center investments announced in just a handful of countries: $3.2bn in the UK, $3.5bn in Australia, $2.1bn in Spain, €3.2bn in Germany, $1bn in the US state of Georgia and $10bn in Wisconsin. And those are just some of the regional investments across their network of 300 data centers spanning 60+ regions. There is also talk of a supercomputer for OpenAI that may cost Microsoft another $100bn!

- Amazon leadership expects their CapEx to grow significantly in 2024 from the $48bn they spent in 2023, driven primarily by the expansion of the AWS infrastructure buildout for AI.

- Google spent $11bn to scale up its servers and data centers in Q4 of 2023 alone. They admit those investments were made to meet expected AI demand and are anticipating the rate and total size of their infrastructure spend to increase significantly in 2024 due to AI.

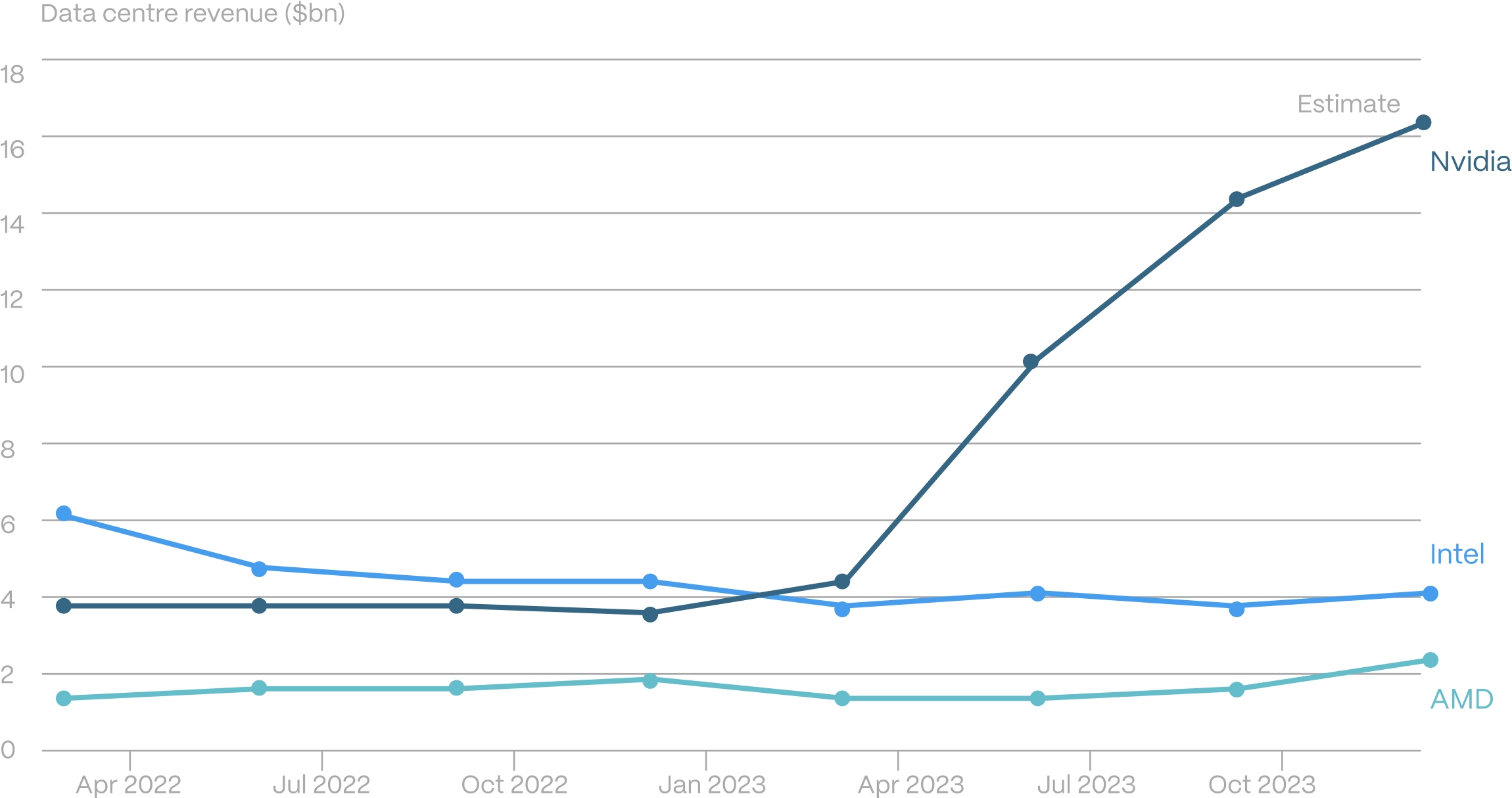

And this is how much was spent on NVIDIA AI hardware already in 2023:

Source: Company reports. Nvidia’s 2023 figures are for fiscal year ending Jan 2024

Jensen Huang, CEO of NVIDIA, has been pitching a total of $1tn to be poured into AI acceleration over the next few years, a prediction he recently doubled to $2tn, reportedly prompted by the interest he has seen from sovereign players. Analysts at Altimeter expect $160bn and over $200bn in AI-related data center spend globally in '24 and '25, respectively.

Now compare these numbers with what Web3 can offer independent data center operators to incentivize them to expand CapEx on the latest AI hardware:

- The total market capitalization of all Decentralized Physical Infrastructure (DePIn) projects currently sits at around $40bn, in relatively illiquid and predominantly speculative tokens. Essentially, the market caps of these networks equal the upper-bound estimate of their contributors' total CapEx, given that the networks incentivize this buildout with tokens. Yet the current market cap is of little use here, since those tokens have already been issued.

- So let's assume another $80bn (2x the existing value) of private and public DePIn token capitalization hits the market as incentives over the next 3-5 years, and that 100% of it goes towards AI use cases.

Even if we divide this very rough estimate by 3 (years), which gives about $27bn a year, and compare it with the hard cash the Hyperscalers alone are spending in 2024, it is clear that slapping token incentives onto a bunch of "decentralized GPU network" projects is not enough.
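The comparison can be spelled out in a few lines. The sketch uses only figures quoted in this section: the hypothetical $80bn of new DePIn incentives spread over 3 years, the low end of Meta's $30-37bn 2024 CapEx guidance, and Altimeter's $160bn estimate of global AI data center spend in 2024. It generously treats every token dollar as if it were hard cash spent on AI hardware, which it is not.

```python
# Figures quoted in the text, in billions of USD.
NEW_DEPIN_INCENTIVES = 80.0   # hypothetical new token incentives (2x today's ~$40bn DePIn market cap)
YEARS = 3                     # spread evenly over 3 years, per the rough estimate
META_CAPEX_2024_LOW = 30.0    # low end of Meta's $30-37bn 2024 CapEx guidance
GLOBAL_AI_DC_2024 = 160.0     # Altimeter estimate of global AI data center spend in 2024

# Generously treat every token dollar as if it were hard cash spent on AI hardware.
depin_per_year = NEW_DEPIN_INCENTIVES / YEARS

print(f"DePIn incentives per year:      ${depin_per_year:.1f}bn")
print(f"as a share of Meta alone (low): {depin_per_year / META_CAPEX_2024_LOW:.0%}")
print(f"as a share of global AI DC:     {depin_per_year / GLOBAL_AI_DC_2024:.0%}")
```

Even under these generous assumptions, a year of new DePIn incentives falls short of a single company's CapEx and amounts to a small fraction of global AI data center spend.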

There also need to be billions of dollars' worth of investor demand to absorb these tokens, since network operators sell a big chunk of the coins they mine to cover their significant CapEx and OpEx. And some billions more to push those tokens higher and incentivize further build-out to outcompete the Hyperscalers.

Meanwhile, anyone with intimate knowledge of how most Web3 servers are currently run would expect a big portion of this "Decentralized Physical Infrastructure" to actually run on those same Hyperscalers' cloud services. And, of course, the spike in demand for GPUs and other AI-specialized hardware is driving more supply, which should eventually make renting or buying it much cheaper. At least, that is the expectation.

But also consider this: right now NVIDIA needs to prioritize clients for its latest-gen GPUs. It is also beginning to compete with the biggest cloud providers on their own turf, offering AI platform services to enterprise clients already locked into those Hyperscalers. This eventually incentivizes it either to build out its own data centers over time (eating into the fat profit margins it enjoys right now, hence less likely) or to significantly limit its AI hardware sales to the cloud providers in its partner network.

Also, NVIDIA's competitors coming out with their own AI-specialized hardware mostly rely on the same chip manufacturer as NVIDIA: TSMC. So essentially all AI hardware companies are currently competing for TSMC's capacity, and TSMC, too, has to prioritize certain customers over others. Samsung and potentially Intel (which is trying to get back into state-of-the-art chip fabrication for its own hardware soon) may be able to absorb extra demand, but TSMC produces the majority of AI-related chips at the moment, and scaling and calibrating cutting-edge chip fabrication (3nm and 2nm) takes years.

On top of that, all cutting-edge chip fabrication is currently done in a geopolitically tense region, by TSMC in Taiwan, next to the Taiwan Strait, and by Samsung in South Korea. A military conflict there could materialize before the facilities now being built in the US to offset this risk (which are not expected to produce next-gen chips for a few more years) come online.

And finally, China, which is essentially cut off from the latest-generation AI hardware due to restrictions the US has imposed on NVIDIA and TSMC, is competing for whatever compute remains available, just as Web3 DePIn networks are. Unlike Web3, Chinese companies actually do have their own competitive models, e.g. the LLMs from Baidu and Alibaba, which require a lot of previous-generation devices to run.

So there is a non-trivial risk that, for one of the reasons stated above or a confluence of them, Hyperscalers simply restrict external access to their AI hardware as the AI domination war intensifies and takes priority over the cloud business. Basically, it's a scenario where they take up all AI-related cloud capacity for their own use and no longer offer it to anyone else, while also gobbling up all of the newest hardware. If this happens, the remaining compute supply comes under even higher demand from other big players, including sovereigns. All the while, the consumer-grade GPUs left out there keep getting less competitive.

Obviously, this is an extreme scenario, but the prize is too big for the big players to back down if hardware bottlenecks persist. That would leave decentralized operators like tier-2 data centers and retail-grade hardware owners, who make up the majority of Web3 DePIn providers, out of the competition.

The Other Side of The Coin

While the crypto founders are asleep at the wheel, the AI heavy-hitters are watching crypto closely. Government pressures and competition may drive them towards adopting crypto in order to avoid getting shut down or heavily regulated.

The Stability AI founder's recent decision to step down in order to start "decentralizing" his company is one of the first public hints of that. In public appearances he had previously made no secret of his plans to launch a token, but only after the company's successful IPO, which somewhat gives away the real motives behind the anticipated move.

In the same vein, while Sam Altman is not operationally involved with Worldcoin, the crypto project he co-founded, its token certainly trades like a proxy for OpenAI. Only time will tell whether there is a path connecting the free internet money project with the AI R&D one, but the Worldcoin team seems to acknowledge that the market is testing this hypothesis.

It makes a lot of sense to us that AI giants may explore different paths to decentralization. The problem, again, is that Web3 has not produced meaningful solutions here. "Governance tokens" are a meme for the most part, while the only truly decentralized networks at the moment, $BTC and $ETH, are the ones that explicitly avoid direct ties between asset holders and their network's development and operations.

The same (dis)incentives that slow down technological development also hold back the development of new designs for governing crypto networks. Startup teams just slap a "governance token" on top of their product, hoping to figure it out as they gather momentum, and end up entrenched in "governance theater" around resource allocation.

Conclusion

The AI race is on and everyone is very serious about it. We cannot identify a flaw in the big tech incumbents' thinking when it comes to scaling their compute at unprecedented rates: more compute means better AI, and better AI means cutting costs, adding new revenue and expanding market share. To us, this means the bubble is justified, but the fakers will still get washed out in the inevitable shakeouts ahead.

Centralized big corporate AI is dominating the field, and legitimate startups find it hard to keep up. The Web3 space was late to the party but is joining the race too. The market is rewarding crypto AI projects too richly compared to Web2 startups in the space, which diverts founders' attention from shipping product to pumping the token at a critical juncture, when the window of opportunity to catch up is closing fast. So far there hasn't been any orthogonal innovation here that removes the need to expand compute to massive scale in order to compete.

There is now a credible open source movement around consumer-facing models, originally driven by a few centralized players opting to compete with bigger closed-source rivals for market share (e.g. Meta, Stability AI). But now the community is catching up and putting pressure on the leading AI firms. These pressures will continue to affect closed-source development of AI products, but not in a meaningful way while open source remains on the catching-up side. This is another big opportunity for the Web3 space, but only if it solves decentralized model training and inference.

So, while on the surface the "classical" openings for disruptors are present, the reality couldn't be further from favoring them. AI is predominantly tied to compute, and nothing about that can change absent breakthrough innovation in the next 3-5 years, a crucial period for determining who controls and steers AI development.

The compute market itself, even though demand is boosting efforts on the supply side, cannot "let a hundred flowers bloom" either, with competition between manufacturers constrained by structural factors like chip fabrication and economies of scale.

We remain optimistic about human ingenuity and are certain there are enough smart and noble people trying to crack the AI problem space in a way that favors the free world over top-down corporate or government control. But the odds look very slim, it's a coin toss at best, and Web3 founders are too busy flipping the coin for financial rather than real-world impact.

If you are building something cool to help increase Web3's chances and aren't just riding the hype wave, hit us up.